How the sound design of Blackbox was created to complement and guide its unique gameplay.

When developer Ryan McLeod devised the abstract puzzle iOS app Blackbox, he started from the principle that every challenge should be solvable without touching the screen.

The result was much more than that: a collection of over 50 artful, minimal puzzles – equal parts baffling, addictive, and satisfying – using nearly every sensor and parameter available on an iPhone other than touch. But one year and over 3.5 million downloads later, McLeod saw further opportunity in the realms of sound and iOS’s powerful Accessibility settings.

By introducing new sonic interfaces paired with careful haptic feedback and countless accessibility enhancements, the resulting update brought the game to a whole new level. Now featuring over 70 puzzles (and growing), and fully accessible to nearly any user regardless of vision or ability, Blackbox received a 2017 Apple Design Award.

To create the sonic interface for the new update, McLeod enlisted sound designer Gus Callahan, who took this on as his first game audio project. We sat down with Callahan to discuss his approach and the challenges he faced creating the sound for Blackbox.

Note: We've omitted videos of gameplay examples to avoid spoilers, so you'll just have to download Blackbox to experience it for yourself :)

Music from teaser video not created by Callahan

PSE: What was your inspiration for the sound design?

Gus Callahan: I was going for something between sleek and cute, though I don’t really think Ryan wanted the cute. Some of those animations are just so cute, it’s like, how can I not go there?

But we both wanted it to be sleek and simple to match the game’s minimal visual aesthetic.

There’s a great video of BMW sound designers that kept coming to mind when I was conceptualizing the direction. They’re very simple sounds…spectral sounds, sine tones, clicks. I kept everything in the key of C to keep a general harmony across the whole game.
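As a rough illustration of what keeping everything in one key means in practice, the sketch below computes the frequencies of a C scale and snaps an arbitrary frequency to the nearest scale tone. It assumes 12-tone equal temperament, A4 = 440 Hz, and a major scale; the interview only says "the key of C", so the scale choice and all function names here are hypothetical.

```python
A4_HZ = 440.0  # standard concert pitch reference (assumption)
C_MAJOR_STEPS = [0, 2, 4, 5, 7, 9, 11]  # semitone offsets from C; "major" is an assumption


def midi_to_hz(note):
    """Convert a MIDI note number to a frequency in 12-tone equal temperament."""
    return A4_HZ * 2 ** ((note - 69) / 12)


def c_scale_frequencies(octave=4):
    """Return the frequencies of one octave of the C major scale (MIDI 60 is C4)."""
    root = 12 * (octave + 1)  # MIDI number of C in the requested octave
    return [midi_to_hz(root + step) for step in C_MAJOR_STEPS]


def snap_to_scale(freq_hz, octave=4):
    """Return the scale tone in the given octave that is closest to freq_hz."""
    return min(c_scale_frequencies(octave), key=lambda f: abs(f - freq_hz))
```

Deriving every tone from one shared table like this is one simple way to make separately designed sounds stay in harmony with each other.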

Everything uses the same scales – stuff like that helps keep it cohesive throughout.

Gus Callahan


What audio tools did you rely on the most?

I was mainly working with AudioKit, which is an audio framework for iOS. It’s an extensive library of audio tools for the Xcode development environment that works with programming languages like Swift and Csound. It's just line-by-line programming, and it has all these modular building blocks you can chain together like oscillators, filters, reverbs, effects, LFOs, amplitude modulation, frequency modulation, etc.
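To make the idea of chaining modular building blocks concrete, here is a minimal, framework-free sketch: a sine oscillator feeds an LFO-driven amplitude modulator, which feeds a one-pole low-pass filter. This is not AudioKit code and does not reflect its API; the function names, the 44.1 kHz sample rate, and the parameter values are all assumptions chosen for illustration.

```python
import math

SAMPLE_RATE = 44_100  # samples per second (assumed)


def sine_oscillator(freq_hz, seconds):
    """Generate a sine tone as a list of samples in the range -1..1."""
    n = int(SAMPLE_RATE * seconds)
    return [math.sin(2 * math.pi * freq_hz * i / SAMPLE_RATE) for i in range(n)]


def tremolo(samples, lfo_hz, depth=0.5):
    """Amplitude modulation: an LFO moves the gain between (1 - depth) and 1."""
    out = []
    for i, s in enumerate(samples):
        lfo = 0.5 * (1 + math.sin(2 * math.pi * lfo_hz * i / SAMPLE_RATE))  # 0..1
        out.append(s * (1 - depth + depth * lfo))
    return out


def one_pole_lowpass(samples, cutoff_hz):
    """Very simple low-pass filter that smooths the signal toward each new sample."""
    alpha = 1 - math.exp(-2 * math.pi * cutoff_hz / SAMPLE_RATE)
    out, y = [], 0.0
    for s in samples:
        y += alpha * (s - y)
        out.append(y)
    return out


# Chain the blocks: oscillator -> amplitude modulation -> filter.
signal = one_pole_lowpass(
    tremolo(sine_oscillator(261.63, 0.25), lfo_hz=4.0),
    cutoff_hz=2000.0,
)
```

Each block takes samples in and returns samples out, so blocks can be reordered or swapped without touching the others, which is the appeal of the modular approach described above.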

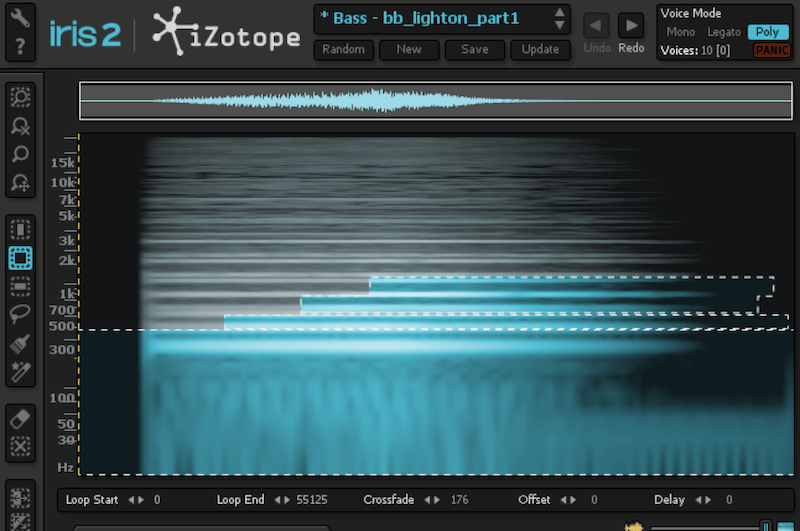

Some of the assets we implemented were things I put together in Ableton, either by sampling synthesizers or by pulling sounds from the Pro Sound Effects Online Library and processing them with iZotope Iris, for example.

What was your collaboration process like with the developer, Ryan McLeod?

Ryan is an incredible programmer, so I really learned a lot about the code aspects from him. It was a fun challenge trying to communicate exactly what should be happening and translating from synthesis to coding language. I would build up synthesizers in AudioKit and have sliders modulate the sound corresponding to the gameplay. Then Ryan would do the implementation. Since everything would mirror the animations he already created, a lot of times he could just pull from animation changes.
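A hedged sketch of how a sound parameter might follow an animation: a normalized progress value (0 at the start of an animation, 1 at the end) is mapped to a pitch and a gain. The mapping ranges, the exponential pitch curve, and the function names are assumptions for illustration, not the actual Blackbox implementation.

```python
def clamp01(value):
    """Limit a value to the range 0..1."""
    return min(1.0, max(0.0, value))


def progress_to_frequency(progress, low_hz=261.63, high_hz=523.25):
    """Map animation progress to pitch.

    Exponential interpolation is used so that equal steps in progress
    are heard as equal steps in pitch.
    """
    t = clamp01(progress)
    return low_hz * (high_hz / low_hz) ** t


def progress_to_gain(progress, floor=0.2):
    """Map animation progress linearly to a gain between floor and 1.0."""
    t = clamp01(progress)
    return floor + (1.0 - floor) * t
```

In this sketch, the same progress value that drives a visual animation could be passed to both functions on every frame, which stands in for the slider during design.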

It was definitely challenging to add this whole layer, and AudioKit is still evolving. The developer from AudioKit, Aure, was incredibly helpful and worked with us a lot of the time, actually.

Ryan McLeod (left) listening to one of Gus' creations

What was the first sound asset you created?

The first sound that we intended to make was the “light on” sound you hear when you complete a challenge. But it ended up being the very last sound we finished. Since it’s the sound you hear the most, everything else really had to inform the way it came out.

The sound is sampled from a synthesizer, played as a 5th, and processed using Iris (screenshot above). Here it is before and after spectral processing in Iris, which really simplified it and added motion to the sound to pair perfectly with the animation:

Tell me about the implementation of accessibility settings.

The idea was to make the game playable for anyone regardless of ability by tapping into the accessibility functions on the iPhone. The settings are incredibly deep, ranging from intuitive hotkeys, to big gestural sweeps, to controlling everything solely with eye movements.

The challenge was to make the game completely accessible while retaining the ambiguity of the puzzles, using clever text from Ryan plus sounds that mirror the gameplay, all without giving away too much. We also connected with accessibility users to help beta test challenges, and we got a lot of great resources and info from The A11y Project, which is a growing roadmap for programming and accessibility. That gave us a lot of insight.

“Don’t go down a sound design rabbit hole and overcomplicate the process... Consistency is more important to the end result.”

What’s a major challenge you faced, especially with this being your first game audio project?

Accessibility control often ducks out regular audio, so working with and around that was difficult. A lot of the challenge parameters we were coding with would often break the audio engine, for example (spoiler alert) when using phone calls, the silent switch, or volume controls as puzzle pieces. The way we used AudioKit was sort of unconventional – it’s mainly been used for synth apps, drum machines, stuff like that – so getting it to do what we wanted took more work.

Compressing everything to get it to the right size was challenging as well – I had to keep downsampling. It was also tough making the sound consistent across devices and dealing with the range of different speakers. Compared to the iPhone 4s, for example, the iPhone 7 is way louder, clips more easily, and has stereo. And then some people are using headphones, so you can’t completely destroy stuff and have it sound horrible for them.
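To show why downsampling shrinks assets, the sketch below estimates the size of uncompressed PCM audio and halves a sample rate by averaging adjacent samples. The interview does not state which file formats or sample rates were used, so the default values and both function names are assumptions, and the averaging step is only a crude stand-in for a proper resampler.

```python
def pcm_size_bytes(seconds, sample_rate=44_100, bit_depth=16, channels=2):
    """Size of uncompressed PCM audio: duration x rate x bytes per sample x channels."""
    return int(seconds * sample_rate * (bit_depth // 8) * channels)


def downsample_by_two(samples):
    """Halve the sample rate by averaging each adjacent pair of samples.

    Averaging acts as a very rough anti-aliasing filter; a trailing
    unpaired sample is dropped.
    """
    return [
        (samples[i] + samples[i + 1]) / 2
        for i in range(0, len(samples) - 1, 2)
    ]
```

Under these assumptions, one second of 16-bit stereo audio at 44.1 kHz takes 176,400 bytes, while the same second in mono at 22.05 kHz takes 44,100 bytes, a fourfold saving before any codec is applied.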

Sonic interface development

What’s a lesson you learned that you’ll take into account for your next project?

Don’t go down a sound design rabbit hole and overcomplicate the process. When I fell into that trap it inevitably sounded more electro-acoustic which was just the wrong vibe entirely. It was too much recording and processing to make it try and sound good. Keeping it simple and direct was always the way to go.

Having a library is so important to help keep an overall unity across all the sounds. Making each individual sound from the ground up is fun, but it leaves you with all these disparate pieces – even if it sounds cool. Consistency is more important to the end result.

Follow Gus Callahan: Website

Follow Ryan McLeod: Twitter

Learn more about Blackbox: Website | Cool review